Moiré in z-axis

Monday, April 22nd, 2013

I often shoot video and take pictures of screen-based interfaces, and Moiré patterns show up frequently despite anti-aliasing filters. I found out that fashion photographers encounter the same issue when taking close-ups of garments. The Moiré effect appears when two or more grids are superposed. A grid can be the weave of a fabric, the array of a digital camera sensor, the pixels in a screen, etc.
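As a rough guide to why superposed grids produce these patterns (a textbook simplification that assumes two parallel line gratings rather than the 2D grids discussed in this post), gratings with periods $p_1$ and $p_2$ beat against each other with a period of

$$
P = \frac{p_1 \, p_2}{\lvert p_1 - p_2 \rvert}
$$

If the second grating is a copy of the first scaled by a factor $s$, so that $p_2 = s\,p_1$, this becomes $P = s\,p_1 / \lvert s - 1 \rvert$. With $s = 1.05$, for example, the fringes are 21 times wider than the original period, which is why a small change in scale can produce a large, visible pattern.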

The relative movement between the grids creates dynamic Moiré patterns. For instance, this effect is apparent when zooming in and out of pictures of a screen taken with a phone camera.

(it seems that the Moiré is trying to mimic the wood pattern :)


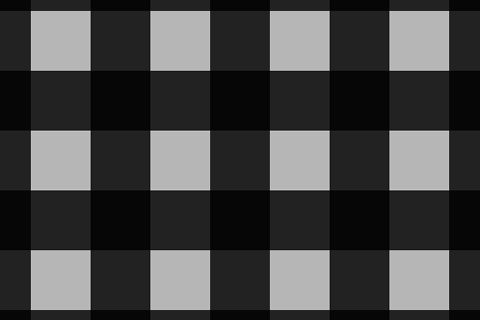

I tried to simulate the Moiré patterns produced by grids moving relative to each other in depth, as in the example above, as opposed to the traditional patterns generated by grids moving in the same plane. I used the pixels of my laptop screen as the first grid and an image I created as the second: a grid of 1-pixel black lines on a white background (or, seen the other way, a matrix of white pixels on a black background):

Using Processing, I created a sequence of images zooming the pattern above from 0% to 200% in 960 steps. The superposed grids (the pattern in the image and the pixels of the screen) produced a series of Moiré patterns that repeat sequentially:

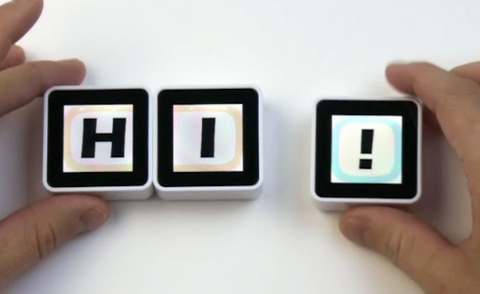

– 30%

– 25% (same as 50%, 16.67%, 12.5%, 10%, …)


– 33% (same as 100%, 20%, …)


– 40% (same as 200%, 66.67%, 13%, …)


– 75% (same as 150%, 18.75%, 7.5%, …)


And some interesting in-betweens:


(you can perceive another dynamic Moiré effect in some of the patterns above when scrolling up and down this page)

These are some of the patterns above at 32x:

I made a video of the sequence. Because of the video compression, the patterns are not shown properly – I suggest downloading it to appreciate their sharpness.

Taking any of the images above as the seed for the zoom-in sequence generates similar patterns.
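The zoom sequence described in this post could be reproduced roughly as follows. This is a minimal sketch in Python rather than the original Processing code, and it rests on several assumptions: the grid lines repeat every 2 pixels (which gives the "matrix of white pixels on a black background"), scaling uses nearest-neighbour sampling, and the sequence starts at the first non-zero step because a 0% zoom has no pixels to sample. The function names `make_grid`, `zoom_nearest`, `zoom_levels` and `to_pgm` are hypothetical.

```python
def make_grid(size, period=2):
    """Square image as rows of 0 (black) and 1 (white).

    A 1-pixel black line is drawn every `period` pixels in both directions.
    period=2 is an assumption; it leaves isolated white pixels on black.
    """
    return [
        [0 if (x % period == 0 or y % period == 0) else 1 for x in range(size)]
        for y in range(size)
    ]


def zoom_nearest(image, scale, out_size):
    """Resample `image` at `scale` onto an out_size x out_size pixel grid.

    Each output pixel takes the value of the nearest source pixel towards
    the origin (nearest-neighbour sampling, an assumption). Output pixels
    that fall outside the source image are left white.
    """
    src = len(image)
    out = []
    for y in range(out_size):
        sy = int(y / scale)
        row = []
        for x in range(out_size):
            sx = int(x / scale)
            row.append(image[sy][sx] if sy < src and sx < src else 1)
        out.append(row)
    return out


def zoom_levels(steps=960, max_scale=2.0):
    """Scale factors for the sequence, from just above 0% up to 200%."""
    return [max_scale * i / steps for i in range(1, steps + 1)]


def to_pgm(image):
    """Serialise the image as plain-text PGM (P2) with a maximum value of 1."""
    height, width = len(image), len(image[0])
    lines = ["P2", f"{width} {height}", "1"]
    lines += [" ".join(str(v) for v in row) for row in image]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    seed = make_grid(64)
    # Preview one frame as text: '#' for black, '.' for white.
    frame = zoom_nearest(seed, 0.75, 24)
    for row in frame:
        print("".join("#" if v == 0 else "." for v in row))
```

To render the whole sequence, loop over `zoom_levels()` and write `to_pgm(zoom_nearest(seed, s, size))` to one file per step. Any frame can also be passed back in as the seed image, as described above.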

I’ll try to post more experiments on Moiré patterns – I’m especially interested in the variations of colour when one of the grids has a coloured structure, such as screens (RGB). Here are three pictures of the same image on a screen: (1) unfocused (to avoid Moiré patterns; the naturally perceived tone is grey), and (2, 3) near the optimal focus at slightly different focal distances, with green and red prevalence respectively:

Some references about Moiré patterns:

– Illustrations and maths regarding the Moiré effect.

– A book containing an exhaustive study of Moiré patterns.

– Another book about grids from the same author.